How do you use AI code review today?

Every code review tool can find bugs, but none has solved the trust problem yet.

/ultrareview shipped with Opus 4.7 and flipped that.

It launches reviewer agents in parallel, each one reports its findings, and every finding gets independently reproduced before you see it.

If the bug can't be verified, it never reaches you.

So I tried it over the weekend, and it is expensive! But it works. Let me show you.

Before we jump in:

DevTools of the Week

Microsoft shipped 1.0 of its agent SDK, which unifies Semantic Kernel and AutoGen into one stable API surface. It ships with full MCP support, with A2A 1.0 arriving imminently. It's a production-grade multi-agent framework for .NET and Python with a long-term support commitment.

Google added subagents to the Gemini CLI. Define specialists as markdown files with YAML frontmatter, delegate to them with @agent_name syntax, each runs in its own context window. Clean answer to context rot in long terminal sessions.

MCP added Server Cards: a standard for exposing server metadata through a .well-known URL so registries and clients can discover capabilities without connecting first. A small change, but with big implications for MCP tool discoverability.

What /ultrareview does

Type /ultrareview to review the current branch, or /ultrareview 42 to review PR 42. Claude Code uploads the diff to its cloud review infrastructure and runs from there.

A fleet of reviewer subagents spins up in a remote sandbox.

Each reads the change from a different angle, like logic bugs, security issues, edge cases, and performance. They work in parallel and each produces its own finding list.

Then the second pass starts: for every issue flagged, a validator subagent gets spawned.

Its job is to look at the actual code and decide whether the bug is real and severe, in the sense that the code is functionally broken.

Only verified findings come back while the rest get filtered. Anthropic's internal docs set the confidence bar at 8/10 before a finding is surfaced.
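To make the two-pass shape concrete, here is a minimal sketch in Python. It is not Anthropic's implementation: the names `Finding`, `two_pass_review`, the toy reviewers, and `toy_validate` are all hypothetical, and threads stand in for remote subagents. It only illustrates the idea of fanning out reviewers, validating each finding independently, and filtering at a confidence bar.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable

CONFIDENCE_BAR = 8  # out of 10, the threshold quoted above


@dataclass(frozen=True)
class Finding:
    angle: str    # e.g. "security", "logic"
    summary: str


# Hypothetical signatures: a reviewer reads a diff and returns findings,
# a validator scores one finding from 0 to 10.
Reviewer = Callable[[str], list[Finding]]
Validator = Callable[[str, Finding], int]


def two_pass_review(diff: str, reviewers: list[Reviewer], validate: Validator) -> list[Finding]:
    # Pass 1: every reviewer reads the same diff in parallel.
    with ThreadPoolExecutor() as pool:
        per_reviewer = list(pool.map(lambda r: r(diff), reviewers))
    candidates = [f for findings in per_reviewer for f in findings]

    # Pass 2: each candidate is checked on its own; weak ones are dropped.
    with ThreadPoolExecutor() as pool:
        scores = list(pool.map(lambda f: validate(diff, f), candidates))
    return [f for f, score in zip(candidates, scores) if score >= CONFIDENCE_BAR]


# Toy stand-ins so the sketch runs end to end.
def security_reviewer(diff: str) -> list[Finding]:
    return [Finding("security", "f-string SQL")] if 'execute(f"' in diff else []


def style_reviewer(diff: str) -> list[Finding]:
    return [Finding("style", "line too long")]


def toy_validate(diff: str, finding: Finding) -> int:
    return 9 if finding.angle == "security" else 3


diff = "cursor.execute(f\"SELECT * FROM users WHERE email = '{email}'\")"
surfaced = two_pass_review(diff, [security_reviewer, style_reviewer], toy_validate)
print(surfaced)
```

In this toy run the style flag scores below the bar and never reaches the caller, which is the behavior the filtering step is meant to produce.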

A typical review takes 5 to 10 minutes, and runs as a background task.

Pro and Max plans get 3 free runs per billing cycle, then it bills as extra usage. You need extra usage enabled before it'll launch a paid review.

The Demo

I set up a PR against a Python service with seven deliberate flaws of different types and varying detection difficulty.

I ran /review first, then /ultrareview. Some highlights include:

Flaw 1: Off-by-one in pagination

Classic page * size vs (page - 1) * size. Skips one record at every page boundary.

/review: caught it.

/ultrareview: caught it, included a reproduction showing which record gets dropped.

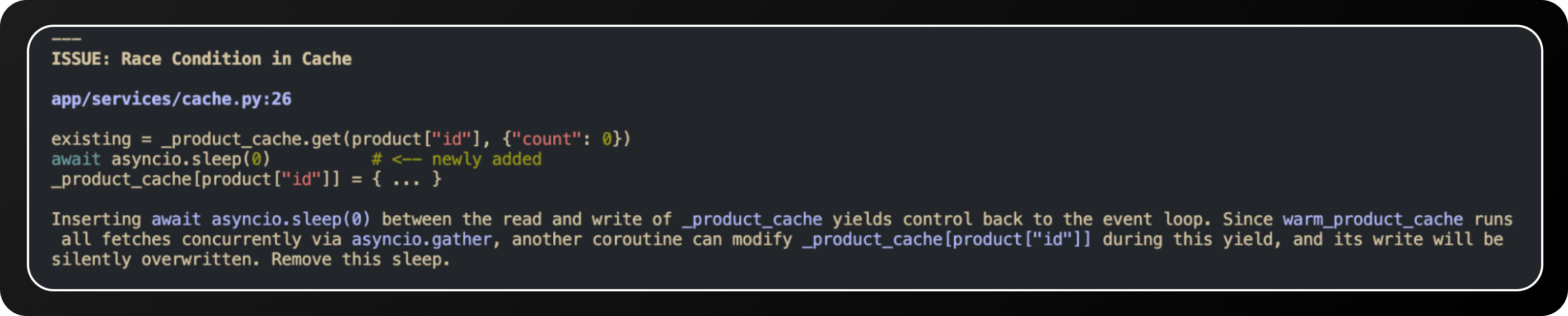

Flaw 2: Race condition in a cache-warming function

Two async tasks writing to the same dict without a lock. Fine in tests, fails under load.

/review: flagged it as a potential issue, suggested "consider using a lock."

/ultrareview: flagged it, showed the specific interleaving that produces a lost write, named the line where the race window opens.
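For readers who have not hit this one before, here is a minimal sketch of a lost write with asyncio. It is not the code from my PR: `warm_unsafe`, `warm_safe`, and the `"hits"` key are made up for illustration. The race needs a read, an `await`, and then a write based on the stale read.

```python
import asyncio


async def warm_unsafe(cache: dict, key: str) -> None:
    current = cache.get(key, 0)   # read
    await asyncio.sleep(0)        # yields to the other task: the race window opens here
    cache[key] = current + 1      # write based on a stale read


async def warm_safe(cache: dict, key: str, lock: asyncio.Lock) -> None:
    async with lock:              # read and write now happen as one unit
        current = cache.get(key, 0)
        await asyncio.sleep(0)
        cache[key] = current + 1


async def main() -> tuple[dict, dict]:
    unsafe: dict = {}
    await asyncio.gather(warm_unsafe(unsafe, "hits"), warm_unsafe(unsafe, "hits"))

    safe: dict = {}
    lock = asyncio.Lock()
    await asyncio.gather(warm_safe(safe, "hits", lock), warm_safe(safe, "hits", lock))
    return unsafe, safe


unsafe_cache, safe_cache = asyncio.run(main())
print(unsafe_cache, safe_cache)
```

Both unsafe tasks read 0 before either writes, so one increment is lost. With the lock, the second task waits and sees the first task's write.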

Flaw 3: SQL injection via f-string interpolation

cursor.execute(f"SELECT * FROM users WHERE email = '{email}'"). Textbook.

/review: caught it.

/ultrareview: caught it, and flagged two other places in the same file using the same pattern, currently safe but fragile.

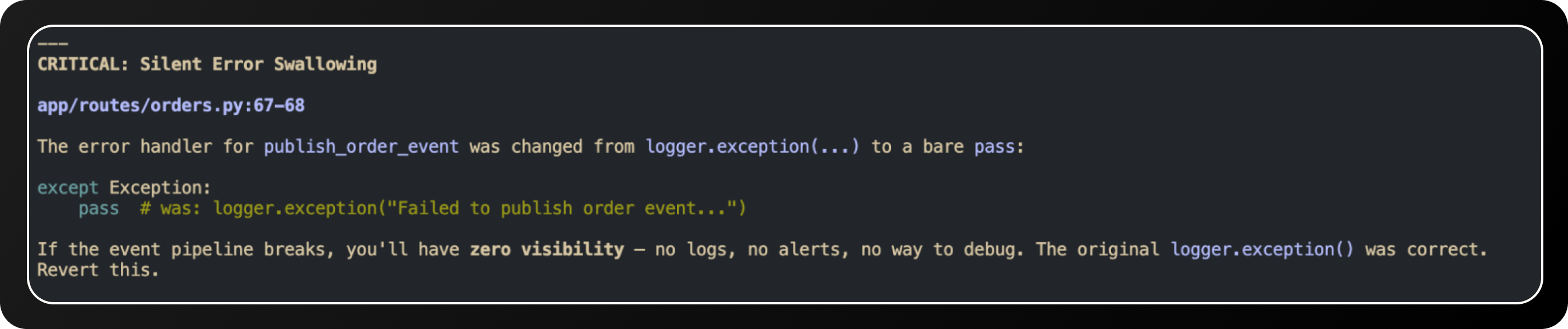

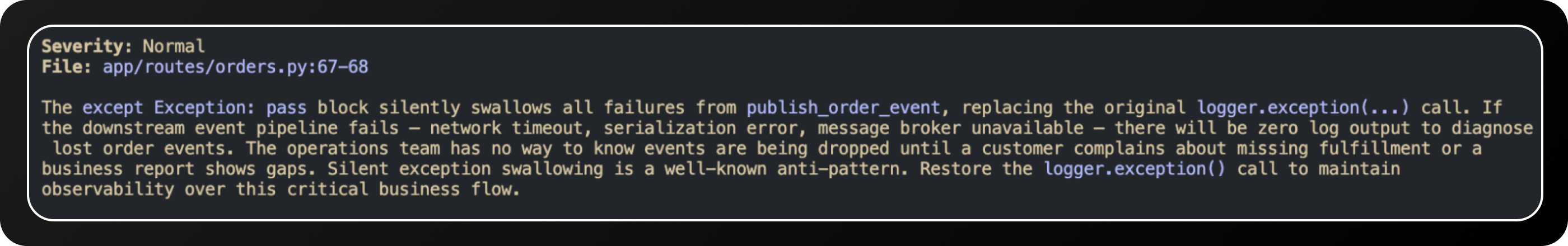
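If you want to see why the f-string version is dangerous, here is a small self-contained sketch using sqlite3 from the standard library. The table, the sample rows, and the helper names `find_user_unsafe` and `find_user_safe` are my own assumptions, not the service from the demo.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("a@example.com",), ("b@example.com",)],
)


def find_user_unsafe(email: str) -> list:
    # The flawed pattern: user input becomes part of the SQL text.
    return conn.execute(f"SELECT * FROM users WHERE email = '{email}'").fetchall()


def find_user_safe(email: str) -> list:
    # Parameterized query: the driver passes the value separately from the SQL.
    return conn.execute("SELECT * FROM users WHERE email = ?", (email,)).fetchall()


payload = "' OR '1'='1"
leaked = find_user_unsafe(payload)   # the WHERE clause becomes always true
blocked = find_user_safe(payload)    # treated as a literal email, matches nothing
print(len(leaked), len(blocked))
```

The placeholder syntax depends on the driver: sqlite3 uses `?`, while some other drivers use `%s`.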

Flaw 4: Silent exception swallow in a background job

except Exception: pass around a Kafka producer call. Messages get dropped, nothing logs.

/review: missed it.

/ultrareview: caught it, flagged the specific production failure mode.
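Here is a rough sketch of the pattern and one possible fix. `FlakyProducer` is a hypothetical stand-in for a Kafka producer so the example runs without a broker, and `publish_silent` / `publish_logged` are names I made up. It does not use any real Kafka client API.

```python
import logging

logger = logging.getLogger("jobs")


class FlakyProducer:
    """Hypothetical stand-in for a Kafka producer whose send always fails."""

    def send(self, topic: str, value: bytes) -> None:
        raise ConnectionError("broker unavailable")


def publish_silent(producer, topic: str, value: bytes) -> None:
    try:
        producer.send(topic, value)
    except Exception:
        pass  # the flaw: the message is dropped and nothing is recorded


def publish_logged(producer, topic: str, value: bytes) -> bool:
    try:
        producer.send(topic, value)
        return True
    except Exception:
        # Logs the message plus the traceback, and tells the caller it failed.
        logger.exception("failed to publish to %s", topic)
        return False


silent_result = publish_silent(FlakyProducer(), "events", b"payload")
logged_result = publish_logged(FlakyProducer(), "events", b"payload")
print(silent_result, logged_result)
```

Whether to log, retry, or re-raise depends on the job. The point is only that the caller and the logs should be able to tell that a message was lost.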

Final score:

/review caught 3 of 7.

/ultrareview caught 7 of 7 across two runs (6 of them on the first pass).

Zero false positives from /ultrareview and two false positives from /review, both of which were just style flags.
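Those numbers translate into precision and recall as follows. This is back-of-the-envelope arithmetic that assumes the catches and false positives listed above were each tool's complete set of findings.

```python
def precision(true_positives: int, false_positives: int) -> float:
    # Share of reported findings that were real.
    return true_positives / (true_positives + false_positives)


def recall(true_positives: int, total_flaws: int) -> float:
    # Share of planted flaws that were found.
    return true_positives / total_flaws


TOTAL_FLAWS = 7

review_precision = precision(3, 2)
review_recall = recall(3, TOTAL_FLAWS)
ultra_precision = precision(7, 0)
ultra_recall = recall(7, TOTAL_FLAWS)

print(f"/review:      precision {review_precision:.0%}, recall {review_recall:.0%}")
print(f"/ultrareview: precision {ultra_precision:.0%}, recall {ultra_recall:.0%}")
```

Under that assumption, /review lands at 60% precision and about 43% recall, against 100% on both for /ultrareview across two runs. Seven flaws in one PR is a tiny sample, so treat these as a demo result and not a benchmark.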

My Take

Most AI review tools have a precision problem. You get 12 findings on a big PR, fix the obvious 3, skim the rest, and eventually stop trusting the tool.

/ultrareview only shows you findings it's already verified. Fewer results, but ones you can act on without spending half your day in triage.

That said, 5 to 10 minutes per review is a long time for small PRs, and with only 3 free runs per billing cycle, the extra-usage charges add up fast if you're shipping a lot of branches.

Don't burn one on a 50-line typo fix.

It won't catch architectural problems or tell you if the abstraction is right. That's still on you.

I'd run it on anything touching auth, payments, migrations, or data handling. For config changes, small refactors, and documentation, skip it.

Until next time,

Vaibhav 🤝🏻
